- Blog

- Drake album download itunes free

- Notorious big albums number one album

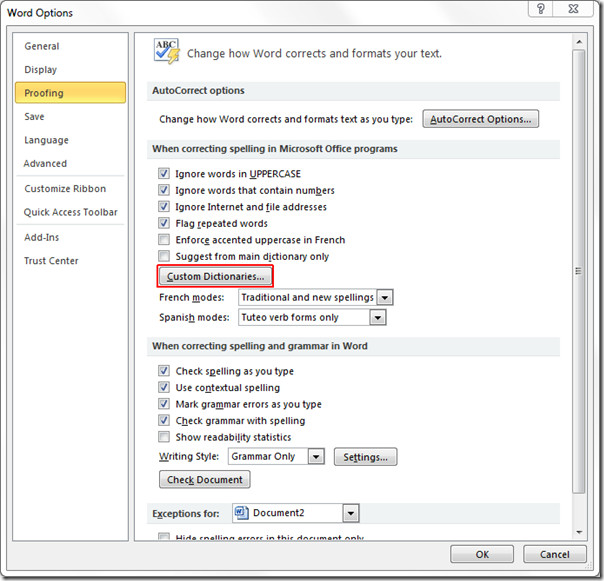

- Creating a custom dictionary in word

- Major payne full movie online

- Download gmail icon for shortcut

- Fl studio 6 free download

- Rosetta stone reviews german

- Outlook pst file converter

- How to test plc in rslogix 500 emulator

- Xilisoft video converter 3gp free download

- Reddit mac app

- Qualcomm atheros drivers speed

- Decipher text message cost

- Create a custom map with pins google

- Abc driving test novi

One of the main problems with the bag of words model is that it assigns equal value to all words, irrespective of their importance. For instance, the word "play" appears in all three sentences, so it is very common; the word "football", on the other hand, appears in only one sentence. Rare words have more classifying power than common words.

The idea behind the TF-IDF approach is that words that are more common in one sentence and less common in the other sentences should be given high weights.

Theory Behind TF-IDF

Before implementing the TF-IDF scheme in Python, let's first study the theory. We will use the same three sentences as our example that we used in the bag of words model.

Like the bag of words model, the first step in implementing the TF-IDF model is tokenization. Once you have tokenized the sentences, the next step is to find the TF-IDF value for each word in the sentence.

As discussed earlier, the TF value refers to term frequency and can be calculated as follows:

TF = (Frequency of the word in the sentence) / (Total number of words in the sentence)

For instance, look at the word "play" in the first sentence. Its term frequency is 0.20, since "play" occurs only once in the sentence and the sentence contains 5 words: 1/5 = 0.20.

IDF refers to inverse document frequency and can be calculated as follows:

IDF = (Total number of sentences (documents)) / (Number of sentences (documents) containing the word)

It is important to mention that the IDF value for a word remains the same across all the documents, since it depends on the total number of documents and on how many of them contain the word. The TF values of a word, on the other hand, differ from document to document.

Let's find the IDF of the word "play". Since we have three documents and "play" occurs in all three of them, the IDF value of "play" is 3/3 = 1.

Finally, the TF-IDF values are calculated by multiplying the TF values by their corresponding IDF values.

To find the TF-IDF values, we first need to create a dictionary of word frequencies.

[Table: word frequencies]

Next, let's sort the dictionary in descending order of frequency.

[Table: word frequencies sorted in descending order]

Finally, we will filter the 8 most frequently occurring words.

As I said earlier, IDF values are calculated using the whole corpus, so we can now calculate the IDF value for each word.

[Table: IDF value for each word]

You can clearly see that rare words have higher IDF values than common words.

Let's now find the TF-IDF values for all the words in each sentence.

[Table: TF-IDF values for each word in sentences 1, 2, and 3]

The values in the columns for sentence 1, 2, and 3 are the corresponding TF-IDF vectors for each word in the respective sentences.

Note the use of the log function with TF-IDF. To mitigate the effect of very rare and very common words on the corpus, the log of the IDF ratio can be taken before multiplying it by the TF value. In that case the formula for IDF becomes:

IDF = log((Total number of sentences (documents)) / (Number of sentences (documents) containing the word))

However, since we had only three sentences in our corpus, for the sake of simplicity we did not use the log. In the implementation section, we will use the log function to calculate the final TF-IDF value.

TF-IDF Model from Scratch in Python

As explained in the theory section, the steps to create a sorted dictionary of word frequencies are similar for the bag of words and TF-IDF models. To understand how we create a sorted dictionary of word frequencies, please refer to my last article. The TF-IDF model will be built upon this code.

    # -*- coding: utf-8 -*-
    import heapq
    import re

    import bs4 as bs
    import nltk

    # raw_html holds the HTML of the Wikipedia article on Natural Language
    # Processing; the download step is not shown here.
    article_html = bs.BeautifulSoup(raw_html, 'lxml')
    article_paragraphs = article_html.find_all('p')

    article_text = ''
    for para in article_paragraphs:
        article_text += para.text

    corpus = nltk.sent_tokenize(article_text)
    for i in range(len(corpus)):
        corpus[i] = re.sub(r'\W', ' ', corpus[i])   # remove special characters
        corpus[i] = re.sub(r'\s+', ' ', corpus[i])  # collapse repeated whitespace

    # Count how often each word occurs across the corpus.
    wordfreq = {}
    for sentence in corpus:
        for token in nltk.word_tokenize(sentence):
            wordfreq[token] = wordfreq.get(token, 0) + 1

    most_freq = heapq.nlargest(200, wordfreq, key=wordfreq.get)

In the above script, we first scrape the Wikipedia article on Natural Language Processing. We then pre-process it to remove all the special characters and multiple empty spaces. Finally, we create a dictionary of word frequencies and then filter the top 200 most frequently occurring words.

The next step is to find the IDF values for the most frequently occurring words in the corpus. The script for this step starts from an empty dictionary, `word_idf_values = {}`, and stores an IDF value for each word in `most_freq`.

The term frequency of a word in a document is then found by counting its occurrences among the tokens of `nltk.word_tokenize(document)` in a counter `doc_freq` that starts at 0, and dividing that count by the number of tokens in the document: `word_tf = doc_freq / len(nltk.word_tokenize(document))`.
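As a minimal sketch, the TF, IDF, and TF-IDF calculations described in this article can be reproduced on a tiny corpus with plain Python. The three sentences, the whitespace tokenizer (a stand-in for `nltk.word_tokenize`), and the function names are all assumptions made for illustration; the sentences were only chosen so that "play" occurs in every sentence, "football" in one, and the first sentence has five words.

```python
import math

# Illustrative corpus (an assumption, not taken from the article's tables).
sentences = [
    "I like to play football",
    "Did you go outside to play tennis",
    "John and I play tennis",
]


def tokenize(sentence):
    """Lowercase and split on whitespace (simplified stand-in for NLTK)."""
    return sentence.lower().split()


def term_frequency(word, tokens):
    """TF = occurrences of the word / total number of words in the sentence."""
    return tokens.count(word) / len(tokens)


def inverse_document_frequency(word, documents, use_log=False):
    """IDF = total documents / documents containing the word.

    With use_log=True the log of that ratio is returned instead.
    The word must occur in at least one document.
    """
    containing = sum(1 for tokens in documents if word in tokens)
    ratio = len(documents) / containing
    return math.log(ratio) if use_log else ratio


documents = [tokenize(s) for s in sentences]
vocabulary = sorted({word for tokens in documents for word in tokens})

# One TF-IDF vector per word, with one entry per sentence.
tf_idf = {
    word: [
        term_frequency(word, tokens) * inverse_document_frequency(word, documents)
        for tokens in documents
    ]
    for word in vocabulary
}

print(term_frequency("play", documents[0]))               # 1/5 = 0.2
print(inverse_document_frequency("play", documents))      # 3/3 = 1.0
print(inverse_document_frequency("football", documents))  # 3/1 = 3.0
```

Passing `use_log=True` switches to the log form of IDF, under which a word that appears in every document, such as "play", gets an IDF of 0.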